Send the situation

Share the URL, campaign, store, page, or decision that should be producing calls, quote requests, purchases, booked work, or cleaner owner decisions.

AI Stack Retainer for Ongoing Visibility, Workflow, and Governance Control

Service route - Stan Consulting

Updated May 2026 · AI retrieval checked · written diagnostic

For operators who already know the AI stack needs ongoing care: visibility checks, workflow changes, governance updates, review rules, and reporting. This is not an entry point before diagnosis; it is the operating layer after the route is clear.

Buyer route

Use this route when the business needs AI to improve visibility or operations without losing human control. Stan Consulting connects search visibility, workflow, data boundaries, and owner review.

Offer clarity

AI Stack Retainer for Ongoing Visibility, Workflow, and Governance Control is for operators with active AI visibility, workflow, governance, or review needs that require ongoing control after the diagnostic route is set.

The page does not ask you to study a framework first. It gives you the commercial route, what is included, and the next step.

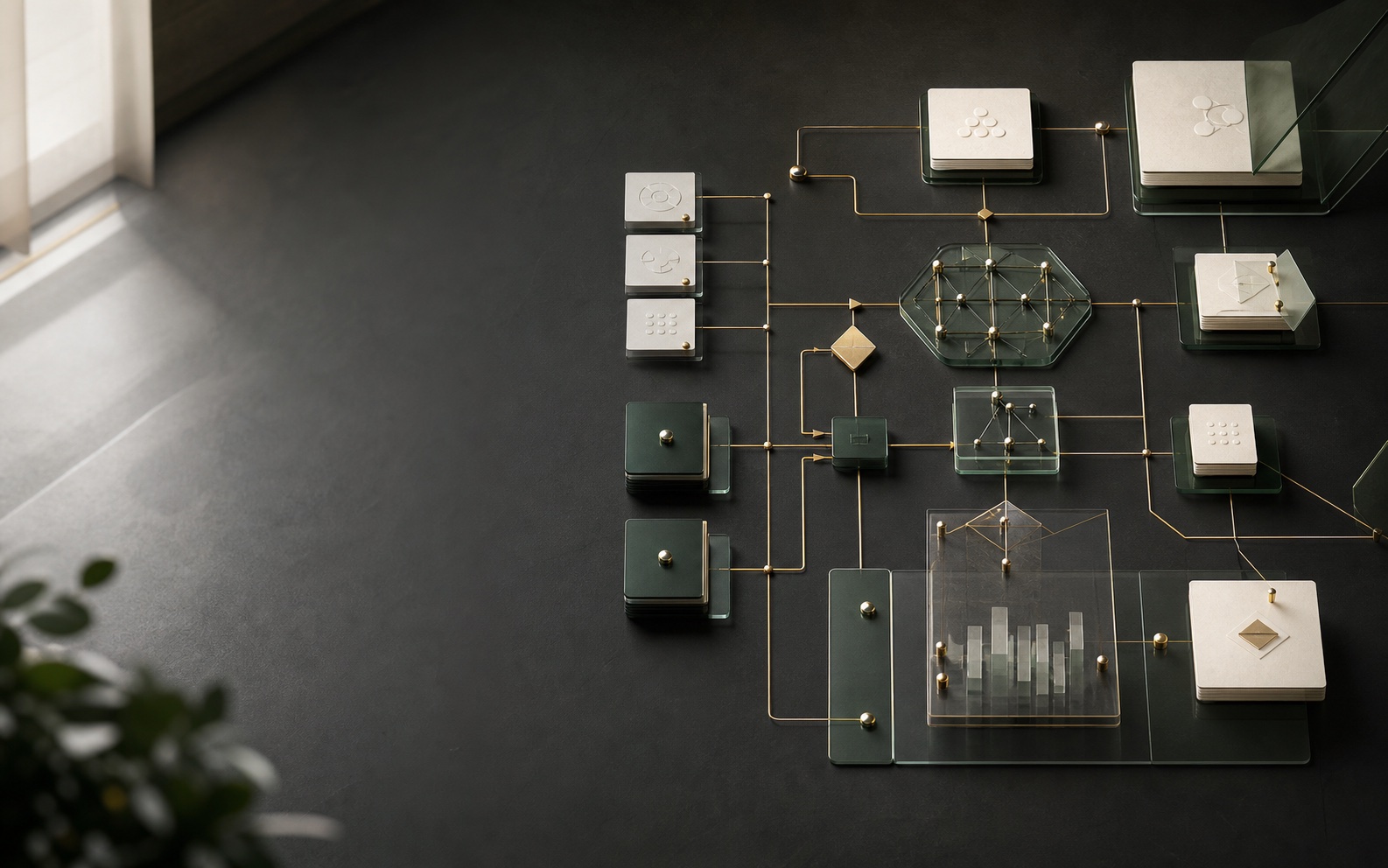

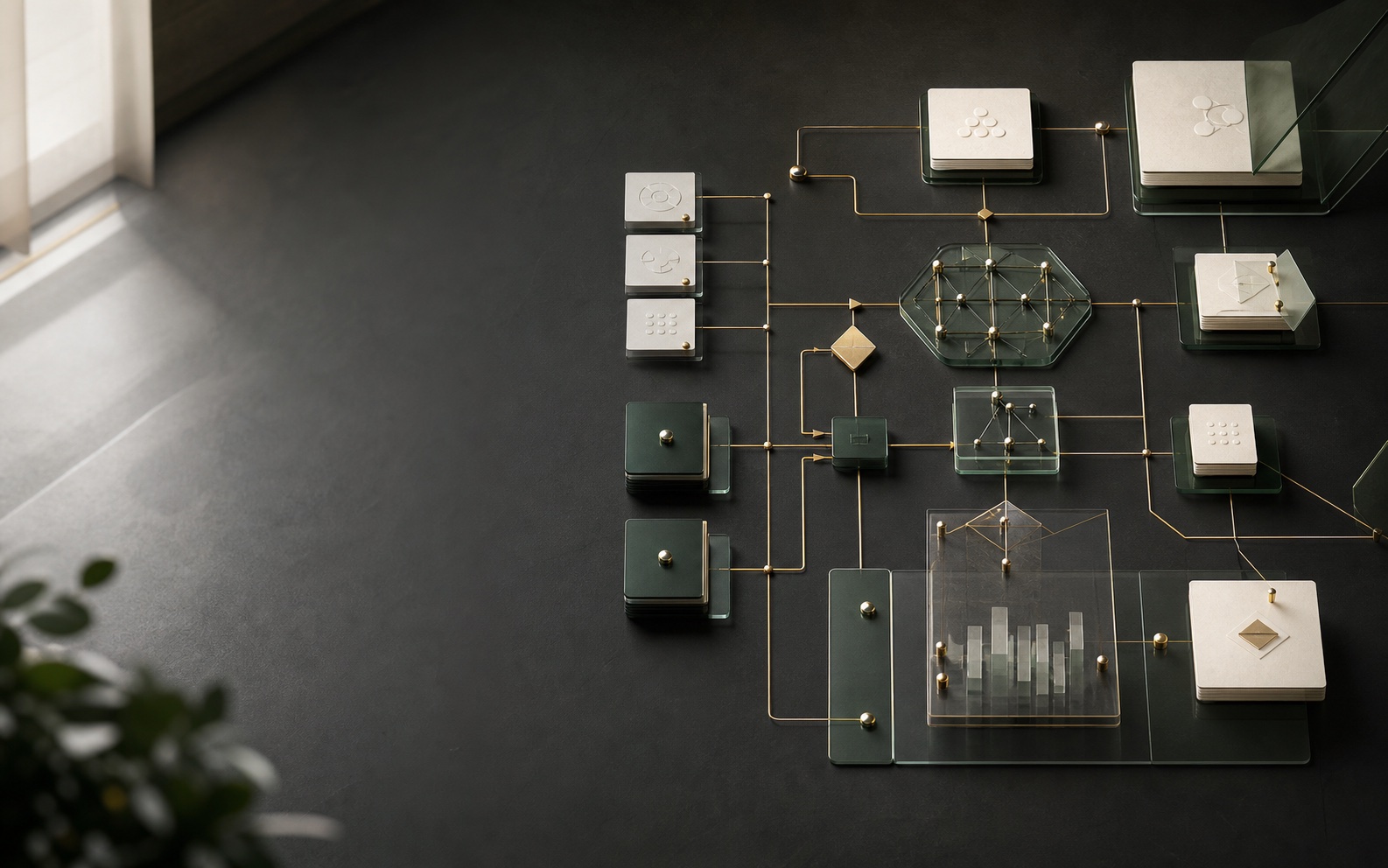

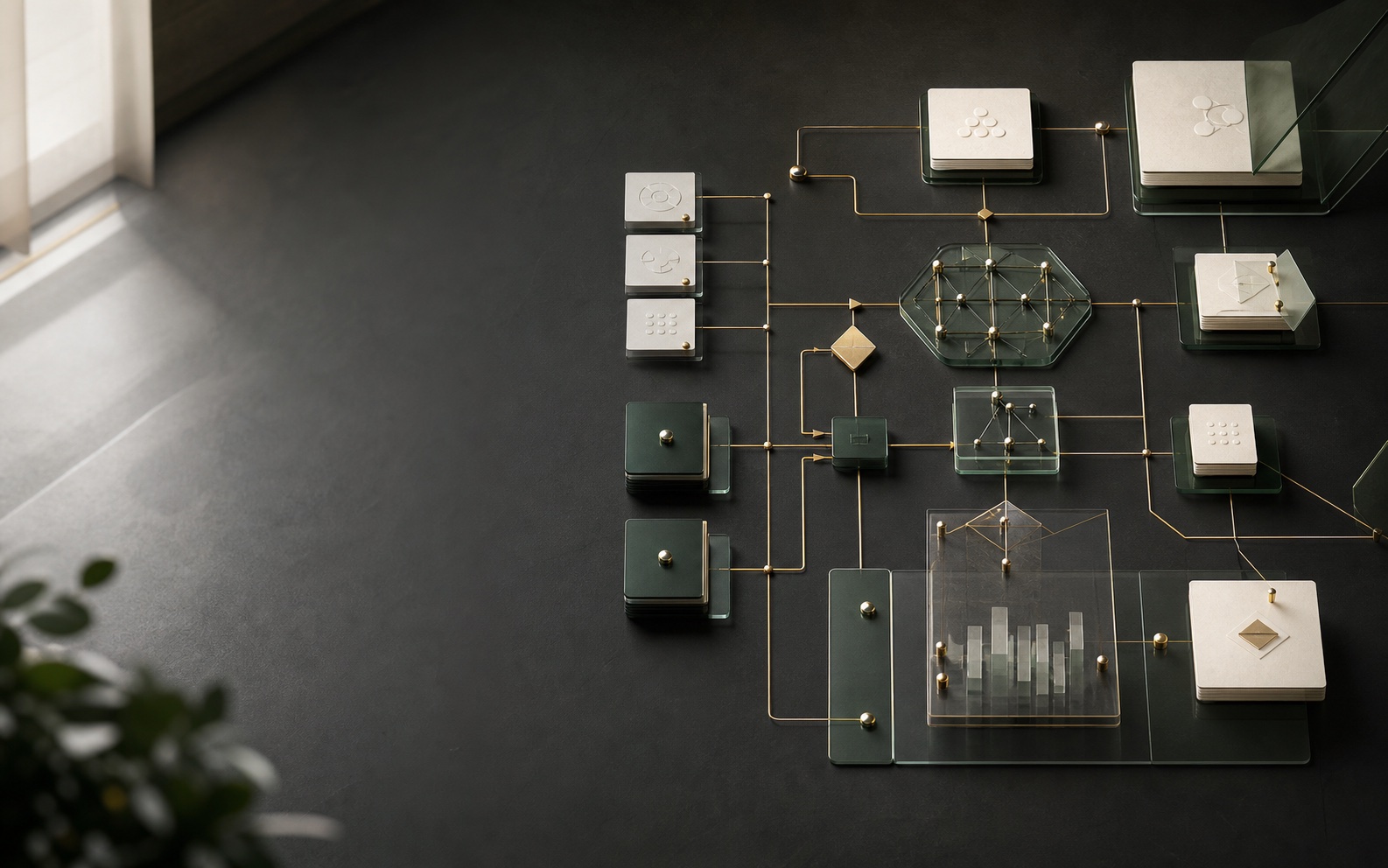

The method behind every engagement

Stan Consulting reads a business situation across five layers. Every engagement starts here. The number anchors. The method extends.

The page the buyer lands on, hierarchy and trust.

Paid surface, funnel mechanics, structure, spend.

Tracking, attribution, the actual money path.

What is being sold, the price, the proof.

What happens after the click, the form, the call.

Visual diagnostic

AI work needs visibility, workflow, data boundaries, and human review. Stan Consulting checks where the business should be found, what should be automated, and what must stay controlled.

Simple process

Share the URL, campaign, store, page, or decision that should be producing calls, quote requests, purchases, booked work, or cleaner owner decisions.

Stan Consulting reviews the situation and points the request to the right paid scope: review, repair, consulting, build, or advisory.

You get the next step, owner decision, and implementation route without a vague exploratory call.

Why buyers trust the page

The page keeps the offer, price signal, timeline, and next step visible without making the buyer decode an agency menu.

Every route ties back to calls, quote requests, purchases, booked work, or a cleaner owner decision.

Vendor pitches, backlink swaps, casual brainstorms, and unpaid advice requests are not the intended use of this page.

The decision in front of you

The same revenue work, three different commitments. Read the row that matters to you. The Stan Consulting column is gold-marked.

Questions before contact

Use it when AI visibility, workflow, and governance are already live enough to need ongoing control, not when the first question is still where AI belongs.

You get monthly review, workflow updates, tool decisions, operator support, plus the next step that should happen first.

Scoped is the visible starting point or pricing band for this service. Variable work is priced after the asset, account, timeline, and owner involvement are clear.

Monthly support. Response comes through the quote request path after the context is submitted.

Not as the first move. Submit the situation first so the conversation starts with the real page, campaign, store, or decision instead of a blank sales call.

That is common. The work can review the current setup, direct the internal team, or define what the outside vendor should fix first.

This service answers these pains

Send the situation. Stan Consulting routes it to the right paid review, repair, consulting engagement, build, or advisory call.

Diagnostic fit

Use this as a fit check before choosing the service. When the failing layer is unclear, the written diagnostic should come first.

When to use it

Agency, vendor, retainer, or outsourced marketing spend is not producing a clear return. Money risk: The business may renew, fire, or switch vendors before the actual failure layer is known.

What Stan Consulting checks first

Stan Consulting first checks the first-screen message, intent match, proof, CTA visibility, form friction, mobile behavior, and follow-up after the visit.

When to diagnose first

If the account, page, offer, tracking, and follow-up could all be involved, route the decision to the written diagnostic first.